
Notes on estimating BlendShapes with mediapipe + streamlit

These notes assume the state of things as of April 2024 (v3 preview?).

The way MediaPipe is called from Python seems to change every so often.

As for the model, blendshape weight estimation also seems to be bundled into the single face_landmarker.task model.
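To check what the bundle actually contains, here is a minimal sketch that lists the files inside a .task file. It rests on the assumption that a .task bundle is an ordinary zip archive, which is worth verifying against your own copy. `list_task_bundle` is a hypothetical helper name, not part of MediaPipe.

```python
import zipfile
from pathlib import Path


def list_task_bundle(task_path):
    """Return the sorted file names stored inside a .task bundle.

    Assumption: the bundle is a zip archive. Raises ValueError otherwise.
    """
    path = Path(task_path)
    if not zipfile.is_zipfile(path):
        raise ValueError(f"{path} is not a zip archive")
    with zipfile.ZipFile(path) as bundle:
        return sorted(bundle.namelist())


if __name__ == "__main__":
    model = Path("face_landmarker.task")
    # Only inspect the bundle if it has already been downloaded.
    if model.exists():
        for name in list_task_bundle(model):
            print(name)
```

If the assumption holds, the listing should show the sub-models packed into the single file.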

Trying it out

We visualize the results with streamlit.

Streamlit supports pyplot and the like, so this takes very little effort.

import mediapipe as mp
from mediapipe import solutions
from mediapipe.framework.formats import landmark_pb2
import matplotlib.pyplot as plt
import cv2
import numpy as np
import streamlit as st
from PIL import Image

model_path = "face_landmarker.task"

BaseOptions = mp.tasks.BaseOptions
FaceLandmarker = mp.tasks.vision.FaceLandmarker
FaceLandmarkerOptions = mp.tasks.vision.FaceLandmarkerOptions
VisionRunningMode = mp.tasks.vision.RunningMode

options = FaceLandmarkerOptions(
    base_options=BaseOptions(model_asset_path=model_path),
    output_face_blendshapes=True,
    running_mode=VisionRunningMode.IMAGE)

img_filename = "alicia2.jpg"
mp_img = mp.Image.create_from_file(img_filename)

# for st
pil_img = Image.open(img_filename)
st.image(pil_img)

with FaceLandmarker.create_from_options(options) as landmarker:
    face_landmarker_result = landmarker.detect(mp_img)

st.write(face_landmarker_result)

# From the MediaPipe example

def draw_landmarks_on_image(rgb_image, detection_result):
    face_landmarks_list = detection_result.face_landmarks
    annotated_image = np.copy(rgb_image)

    # Loop through the detected faces to visualize.
    for idx in range(len(face_landmarks_list)):
        face_landmarks = face_landmarks_list[idx]

        # Draw the face landmarks.
        face_landmarks_proto = landmark_pb2.NormalizedLandmarkList()
        face_landmarks_proto.landmark.extend([
            landmark_pb2.NormalizedLandmark(x=landmark.x, y=landmark.y, z=landmark.z) for landmark in face_landmarks
        ])

        solutions.drawing_utils.draw_landmarks(
            image=annotated_image,
            landmark_list=face_landmarks_proto,
            connections=mp.solutions.face_mesh.FACEMESH_TESSELATION,
            landmark_drawing_spec=None,
            connection_drawing_spec=mp.solutions.drawing_styles
            .get_default_face_mesh_tesselation_style())
        solutions.drawing_utils.draw_landmarks(
            image=annotated_image,
            landmark_list=face_landmarks_proto,
            connections=mp.solutions.face_mesh.FACEMESH_CONTOURS,
            landmark_drawing_spec=None,
            connection_drawing_spec=mp.solutions.drawing_styles
            .get_default_face_mesh_contours_style())
        solutions.drawing_utils.draw_landmarks(
            image=annotated_image,
            landmark_list=face_landmarks_proto,
            connections=mp.solutions.face_mesh.FACEMESH_IRISES,
            landmark_drawing_spec=None,
            connection_drawing_spec=mp.solutions.drawing_styles
            .get_default_face_mesh_iris_connections_style())

    return annotated_image

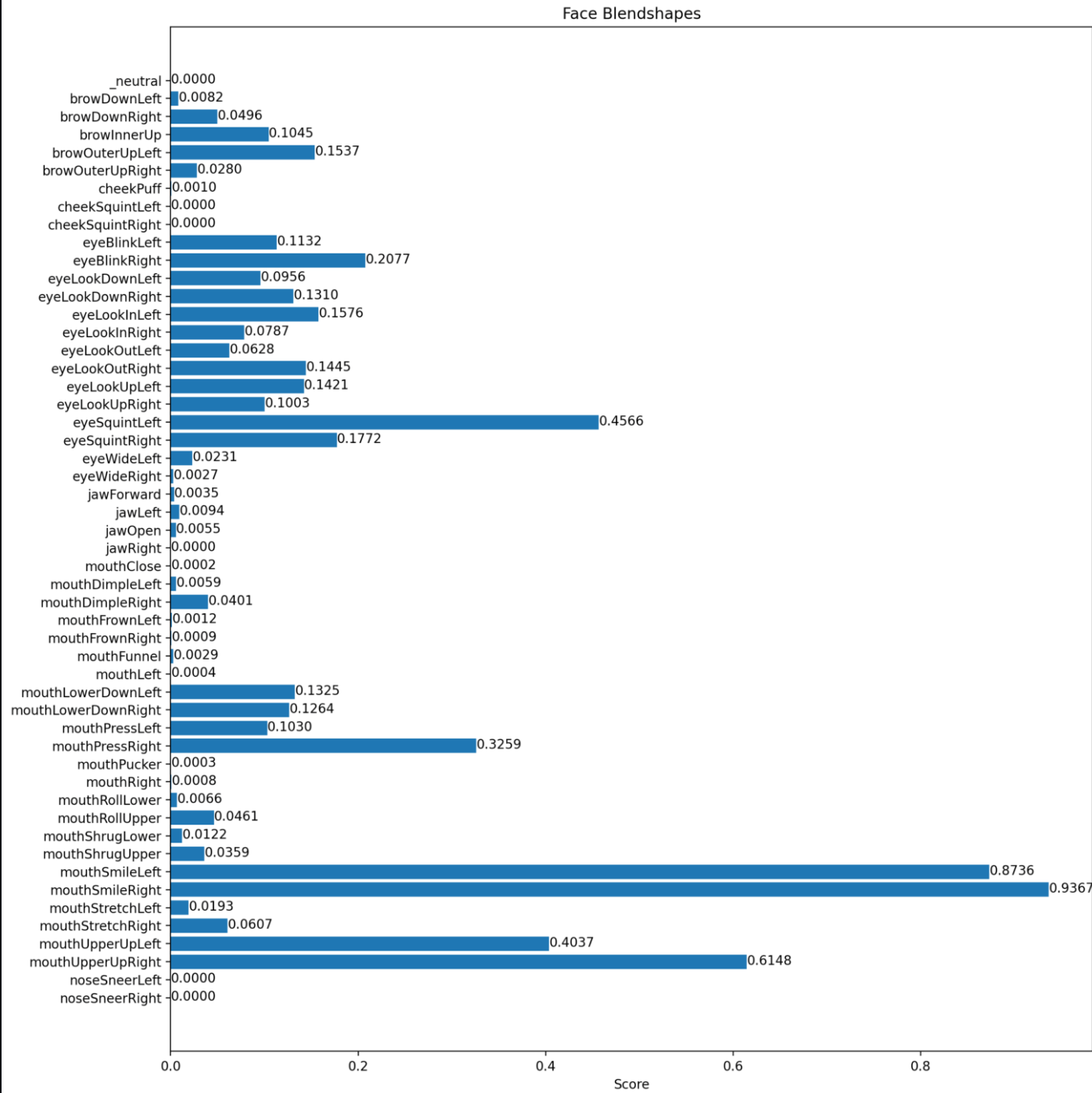

def plot_face_blendshapes_bar_graph(face_blendshapes):
    # Extract the face blendshapes category names and scores.
    face_blendshapes_names = [face_blendshapes_category.category_name for face_blendshapes_category in face_blendshapes]
    face_blendshapes_scores = [face_blendshapes_category.score for face_blendshapes_category in face_blendshapes]
    # The blendshapes are ordered in decreasing score value.
    face_blendshapes_ranks = range(len(face_blendshapes_names))

    fig, ax = plt.subplots(figsize=(12, 12))
    bar = ax.barh(face_blendshapes_ranks, face_blendshapes_scores, label=[str(x) for x in face_blendshapes_ranks])
    ax.set_yticks(face_blendshapes_ranks, face_blendshapes_names)
    ax.invert_yaxis()

    # Label each bar with values
    for score, patch in zip(face_blendshapes_scores, bar.patches):
        plt.text(patch.get_x() + patch.get_width(), patch.get_y(), f"{score:.4f}", va="top")

    ax.set_xlabel('Score')
    ax.set_title("Face Blendshapes")
    plt.tight_layout()
    #plt.show()
    st.pyplot(fig)

annotated_image = draw_landmarks_on_image(mp_img.numpy_view(), face_landmarker_result)

st.image(annotated_image)

plot_face_blendshapes_bar_graph(face_landmarker_result.face_blendshapes[0])

Voila~
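To reuse the scores outside the bar chart, it may help to flatten one face's blendshapes into a plain name-to-score dict. The sketch below relies only on the `category_name` and `score` attributes that the plotting code above reads. `Category` here is a hypothetical stand-in so the snippet runs without MediaPipe, `blendshapes_to_dict` and `top_blendshapes` are hypothetical helper names, and the sample names and values are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Category:
    """Hypothetical stand-in for one blendshape entry (not the MediaPipe class)."""
    category_name: str
    score: float


def blendshapes_to_dict(face_blendshapes):
    """Map each blendshape name to its score for a single face."""
    return {c.category_name: float(c.score) for c in face_blendshapes}


def top_blendshapes(weights, k=3):
    """Return the k (name, score) pairs with the highest scores."""
    ranked = sorted(weights.items(), key=lambda item: item[1], reverse=True)
    return ranked[:k]


if __name__ == "__main__":
    # Illustrative values only, not real model output.
    sample = [
        Category("jawOpen", 0.5),
        Category("eyeBlinkLeft", 0.25),
        Category("mouthSmileLeft", 0.75),
    ]
    weights = blendshapes_to_dict(sample)
    for name, score in top_blendshapes(weights, k=2):
        print(f"{name}: {score:.2f}")
```

In the script above, the same helper would presumably be called as `blendshapes_to_dict(face_landmarker_result.face_blendshapes[0])`.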

TODO

- Support video and camera input

- Send the output over JSON-RPC or similar and use it to deform a 3D model
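For the second item, one possible shape for the payload is a JSON-RPC 2.0 notification (a request without an `id`, so no reply is expected). This is only a sketch: the method name `set_blendshapes`, the `weights` parameter, and the helper `blendshapes_notification` are all hypothetical, and the transport (WebSocket, TCP, and so on) is left out.

```python
import json


def blendshapes_notification(weights, method="set_blendshapes"):
    """Serialize blendshape weights as a JSON-RPC 2.0 notification string.

    `method` and the `weights` parameter name are hypothetical; the receiver
    on the 3D side would need to agree on them.
    """
    payload = {
        "jsonrpc": "2.0",
        "method": method,
        "params": {"weights": {name: float(score) for name, score in weights.items()}},
    }
    return json.dumps(payload)


if __name__ == "__main__":
    # Illustrative values only, not real model output.
    message = blendshapes_notification({"jawOpen": 0.5, "eyeBlinkLeft": 0.25})
    print(message)
```

A notification fits a one-way stream of per-frame weights; if the sender needs acknowledgements, a regular request with an `id` would be the alternative.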
