
Trying out rinna-3b

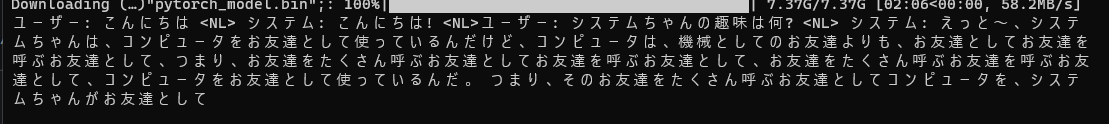

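rinna-3b has been released, so I'm giving it a try.

It's apparently based on GPT-NeoX.

I'll use the sample code from the official model page.

I quickly set up an environment with venv (cu118) and ran the following: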

import torch
from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("rinna/japanese-gpt-neox-3.6b", use_fast=False)
model = AutoModelForCausalLM.from_pretrained("rinna/japanese-gpt-neox-3.6b")

if torch.cuda.is_available():
    model = model.to("cuda")

text = "ユーザー: こんにちは <NL> システム: こんにちは! <NL>ユーザー: システムちゃんの趣味は何? <NL> システム:"
token_ids = tokenizer.encode(text, add_special_tokens=False, return_tensors="pt")

with torch.no_grad():
    output_ids = model.generate(
        token_ids.to(model.device),
        max_new_tokens=100,
        min_new_tokens=100,
        do_sample=True,
        temperature=0.8,
        pad_token_id=tokenizer.pad_token_id,
        bos_token_id=tokenizer.bos_token_id,
        eos_token_id=tokenizer.eos_token_id
    )

output = tokenizer.decode(output_ids.tolist()[0])
print(output)

After I passed no_repeat_ngram_size=2 to generate, the model stopped repeating itself.
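no_repeat_ngram_size=n tells generate never to produce a token that would repeat an n-gram already in the sequence, including the prompt. Below is a pure-Python sketch of that rule. I wrote it from my understanding of Hugging Face's NoRepeatNGramLogitsProcessor; it is not that class's code.

```python
from collections import defaultdict


def banned_next_tokens(token_ids: list[int], n: int) -> set[int]:
    """Token ids that would repeat an n-gram already present in token_ids."""
    if n <= 0 or len(token_ids) + 1 < n:
        return set()
    # Map every (n-1)-token prefix seen so far to the tokens that followed it.
    followers: dict[tuple[int, ...], set[int]] = defaultdict(set)
    for i in range(len(token_ids) - n + 1):
        prefix = tuple(token_ids[i : i + n - 1])
        followers[prefix].add(token_ids[i + n - 1])
    # The next token would close an n-gram whose prefix is the last n-1 tokens.
    current_prefix = tuple(token_ids[len(token_ids) - (n - 1):])
    return set(followers.get(current_prefix, set()))


# Sequence 1 2 3 1 2: with n=2 the bigram (2, 3) already exists, so 3 is banned next.
print(banned_next_tokens([1, 2, 3, 1, 2], n=2))  # {3}
```

n=2 is quite strict, because every two-token pair can appear only once. In longer outputs this can block common Japanese particle sequences, so a larger value such as 3 or 4 may be a gentler option.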

Next, I want to try running LoRA with peft.

I put the following together based on a reference, but it fails with an out-of-bounds error. data["train"] itself prints fine.

# Load the model
import os
from peft.utils.config import TaskType

os.environ["CUDA_VISIBLE_DEVICES"] = "0"

from transformers import AutoTokenizer, AutoConfig, AutoModelForCausalLM, T5Tokenizer
from peft import LoraConfig, get_peft_model

model = AutoModelForCausalLM.from_pretrained(
    "rinna/japanese-gpt-neox-3.6b",
    device_map='auto'
)
tokenizer = T5Tokenizer.from_pretrained("rinna/japanese-gpt-neox-3.6b")

config = LoraConfig(
    r=8,
    lora_alpha=32,
    lora_dropout=0.05,
    bias="none",
    task_type=TaskType.CAUSAL_LM
)
model = get_peft_model(model, config)

import transformers
from datasets import load_dataset

data = load_dataset("kunishou/databricks-dolly-15k-ja")

train_args = transformers.TrainingArguments(
    per_device_train_batch_size=4,
    gradient_accumulation_steps=4,
    gradient_checkpointing=True,
    warmup_steps=100,
    max_steps=200,
    learning_rate=2e-4,
    logging_steps=1,
    output_dir='result',
    num_train_epochs=1,
)

trainer = transformers.Trainer(
    model=model,
    # data["train"] still holds raw text columns here (no input_ids),
    # which is a likely cause of the error mentioned above.
    train_dataset=data["train"],
    args=train_args,
    data_collator=transformers.DataCollatorForLanguageModeling(tokenizer, mlm=False)
)
model.config.use_cache = False  # Silence the warnings. Re-enable for inference.
trainer.train()
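The raw dolly rows only have text columns (instruction, input, output, ...). A causal-LM collator such as DataCollatorForLanguageModeling(tokenizer, mlm=False) expects each example to be tokenized already. Below is a simplified pure-Python sketch of what that kind of collator does: right-pad, build an attention mask, and copy the inputs as labels with padding excluded from the loss. It is my own approximation, not the transformers implementation. The real collator masks every position whose id equals pad_token_id, not only the padded tail.

```python
IGNORE_INDEX = -100  # label value that PyTorch's cross-entropy loss ignores by default


def collate_causal_lm(examples: list[dict], pad_token_id: int) -> dict:
    """Right-pad tokenized examples and build labels for causal LM training."""
    missing = [i for i, ex in enumerate(examples) if "input_ids" not in ex]
    if missing:
        raise KeyError(f"examples {missing} have no 'input_ids'; tokenize the dataset first")
    max_len = max(len(ex["input_ids"]) for ex in examples)
    batch = {"input_ids": [], "attention_mask": [], "labels": []}
    for ex in examples:
        ids = list(ex["input_ids"])
        pad = max_len - len(ids)
        batch["input_ids"].append(ids + [pad_token_id] * pad)
        batch["attention_mask"].append([1] * len(ids) + [0] * pad)
        # Labels are the inputs themselves (the model shifts them internally);
        # padded positions are excluded from the loss.
        batch["labels"].append(ids + [IGNORE_INDEX] * pad)
    return batch


batch = collate_causal_lm([{"input_ids": [5, 6, 7]}, {"input_ids": [8]}], pad_token_id=0)
print(batch["labels"])  # [[5, 6, 7], [8, -100, -100]]
```

Feeding it a raw row like {"instruction": ...} raises a KeyError. That is the same kind of mismatch the untokenized data["train"] runs into above.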

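Copy-pasting the reference code as-is got training started without problems.

Then I switched the model to rinna.

To get it running, I had to install protobuf 3.20.x, remove the target_modules setting from LoraConfig, and also remove model.lm_head = CastOutputToFloat(model.lm_head).

This part probably differs from model to model: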

config = LoraConfig(
    r=8,
    lora_alpha=32,
    lora_dropout=0.05,
    bias="none",
    task_type="CAUSAL_LM"
)
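With target_modules removed, peft falls back to its built-in default modules for the model type. As far as I know, for GPT-NeoX that default is the fused attention projection query_key_value (the finetune.py later on sets it explicitly). Here is a quick count of the trainable parameters r=8 adds. It assumes the model card's shape for rinna/japanese-gpt-neox-3.6b (36 layers, hidden size 2816) and a query_key_value Linear of 2816 -> 3 x 2816. LoRA trains A (r x d_in) and B (d_out x r), so each adapted layer adds r * (d_in + d_out) parameters.

```python
def lora_trainable_params(d_in: int, d_out: int, r: int, n_layers: int) -> int:
    """Parameters LoRA adds when one d_in x d_out Linear per layer is adapted.

    A is r x d_in and B is d_out x r; the frozen base weight is not counted,
    and bias="none" means no bias terms are trained.
    """
    return n_layers * r * (d_in + d_out)


# Assumed shapes (from the model card): hidden size 2816, 36 layers,
# LoRA applied only to the fused query_key_value projection (2816 -> 3 * 2816).
HIDDEN, LAYERS = 2816, 36
print(lora_trainable_params(HIDDEN, 3 * HIDDEN, r=8, n_layers=LAYERS))  # 3244032
```

If a trainable-parameter printout is of this order (scaled linearly with r), LoRA most likely landed only on the attention projections.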

It crashed partway through.

# Load the model
import os
os.environ["CUDA_VISIBLE_DEVICES"] = "0"

import torch
import torch.nn as nn
import bitsandbytes as bnb
from transformers import AutoTokenizer, AutoConfig, AutoModelForCausalLM

model = AutoModelForCausalLM.from_pretrained(
    "rinna/japanese-gpt-neox-3.6b",
    load_in_8bit=True,
    device_map='auto',
)
tokenizer = AutoTokenizer.from_pretrained("rinna/japanese-gpt-neox-3.6b")

for param in model.parameters():
    param.requires_grad = False  # Freeze the model
    if param.ndim == 1:
        # Cast the layer norms to fp32 for stability
        param.data = param.data.to(torch.float32)

model.gradient_checkpointing_enable()
model.enable_input_require_grads()

class CastOutputToFloat(nn.Sequential):
    def forward(self, x): return super().forward(x).to(torch.float32)

def print_trainable_parameters(model):
    """
    Print the number of trainable parameters in the model
    """
    trainable_params = 0
    all_param = 0
    for _, param in model.named_parameters():
        all_param += param.numel()
        if param.requires_grad:
            trainable_params += param.numel()
    print(
        f"trainable params: {trainable_params} || all params: {all_param} || trainable%: {100 * trainable_params / all_param}"
    )

from peft import LoraConfig, get_peft_model

config = LoraConfig(
    r=16,
    lora_alpha=32,
    lora_dropout=0.05,
    bias="none",
    task_type="CAUSAL_LM"
)
model = get_peft_model(model, config)
print_trainable_parameters(model)

import transformers
from datasets import load_dataset

data = load_dataset("kunishou/databricks-dolly-15k-ja")
# No truncation here, so samples longer than the model's 2048-token context get through;
# this is the likely source of the size-mismatch error below.
data = data.map(lambda samples: tokenizer(samples['output']), batched=True)

trainer = transformers.Trainer(
    model=model,
    train_dataset=data['train'],
    args=transformers.TrainingArguments(
        per_device_train_batch_size=4,
        gradient_accumulation_steps=4,
        warmup_steps=100,
        max_steps=200,
        learning_rate=2e-4,
        fp16=True,
        logging_steps=1,
        output_dir='outputs'
    ),
    data_collator=transformers.DataCollatorForLanguageModeling(tokenizer, mlm=False)
)
model.config.use_cache = False  # Silence the warnings. Re-enable for inference.
trainer.train()

batch = tokenizer("Two things are infinite: ", return_tensors='pt')
with torch.cuda.amp.autocast():
    output_tokens = model.generate(**batch, max_new_tokens=50)

print('\n\n', tokenizer.decode(output_tokens[0], skip_special_tokens=True))

Notes for tomorrow:

Stop trying to get things running by blind trial and error (for the nth time).

    attn_output, attn_weights = self._attn(query, key, value, attention_mask, head_mask)
  File "D:\dev\AI_model\peft\.venv\lib\site-packages\transformers\models\gpt_neox\modeling_gpt_neox.py", line 229, in _attn
    attn_scores = torch.where(causal_mask, attn_scores, mask_value)
RuntimeError: The size of tensor a (2048) must match the size of tensor b (2439) at non-singleton dimension 3
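The error says a 2439-token sequence hit a causal mask sized for 2048 positions. The data.map call above tokenizes output without truncation, so long dolly answers overflow the context. Passing truncation=True and a max_length to the tokenizer avoids this, and the later attempts do exactly that. Another common approach is to concatenate everything and cut it into fixed-size blocks, like the group_texts helper in Hugging Face's run_clm.py example. Below is a pure-Python sketch of that idea, not the run_clm code itself. block_size is a parameter you would set to 2048 or less.

```python
from itertools import chain


def group_texts(examples: dict[str, list[list[int]]], block_size: int) -> dict[str, list[list[int]]]:
    """Concatenate tokenized samples and split them into block_size chunks.

    The leftover tail shorter than block_size is dropped, so no chunk
    can exceed the model's context length.
    """
    concatenated = {k: list(chain.from_iterable(examples[k])) for k in examples}
    total = (len(concatenated["input_ids"]) // block_size) * block_size
    result = {
        k: [seq[i : i + block_size] for i in range(0, total, block_size)]
        for k, seq in concatenated.items()
    }
    # For causal LM training the labels are simply a copy of the inputs.
    result["labels"] = [block.copy() for block in result["input_ids"]]
    return result


print(group_texts({"input_ids": [[1, 2, 3], [4, 5, 6, 7]]}, block_size=3)["input_ids"])
# [[1, 2, 3], [4, 5, 6]]
```

With datasets, this would be applied with batched=True to a dataset that contains only the tokenized columns (input_ids, attention_mask), because a batched map must return columns of equal length.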

It's running now.

VRAM usage stays under 10GB, too.
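This version loads the weights with torch_dtype=torch.float16, which is 2 bytes per parameter. A back-of-the-envelope check of the weights alone suggests why staying under 10GB is plausible. It ignores activations, LoRA parameters, and CUDA overhead. 3.6e9 is the nominal size from the model name, not an exact parameter count.

```python
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "int8": 1}


def weight_memory_gb(n_params: float, dtype: str) -> float:
    """Memory for the model weights alone, in decimal GB (1e9 bytes)."""
    return n_params * BYTES_PER_PARAM[dtype] / 1e9


N_PARAMS = 3.6e9  # nominal size from the model name; the exact count differs slightly
for dtype in BYTES_PER_PARAM:
    print(f"{dtype}: {weight_memory_gb(N_PARAMS, dtype):.1f} GB")
# float32: 14.4 GB
# float16: 7.2 GB
# int8: 3.6 GB
```

At fp16 that leaves a few GB for activations, which at batch size 1 with gradient checkpointing is a fairly small amount.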

import os
import peft
from peft.utils.config import TaskType

os.environ["CUDA_VISIBLE_DEVICES"] = "0"

import torch
import torch.nn as nn
import bitsandbytes as bnb
import transformers
from datasets import load_dataset

# Prepare the tokenizer
tokenizer = transformers.AutoTokenizer.from_pretrained("rinna/japanese-gpt-neox-3.6b")

# Load the model
model = transformers.AutoModelForCausalLM.from_pretrained(
    "rinna/japanese-gpt-neox-3.6b",
    device_map='auto',
    torch_dtype=torch.float16
)
model.enable_input_require_grads()
model.gradient_checkpointing_enable()

config = peft.LoraConfig(
    r=8,
    lora_alpha=32,
    lora_dropout=0.01,
    inference_mode=False,
    task_type=TaskType.CAUSAL_LM,
)
model = peft.get_peft_model(model, config)

def tokenize(batch):
    return tokenizer(
        batch["output"],
        padding="max_length",
        truncation=True
    )

# Prepare the dataset
data = load_dataset("kunishou/databricks-dolly-15k-ja")

# Split the dataset into training and validation sets
train_val = data["train"].train_test_split(test_size=0.1, shuffle=True, seed=42)
train_data = train_val["train"]
val_data = train_val["test"]

# Note: this mapped `data` is not used below; train_data/val_data were split off above.
data = data.map(tokenize, batched=True, batch_size=None)

train_args = transformers.TrainingArguments(
    per_device_train_batch_size=1,
    gradient_checkpointing=True,
    logging_steps=1,
    output_dir='outputs',
    num_train_epochs=1
)

def generate_prompt(data_point):
    # sorry about the formatting disaster gotta move fast
    if data_point["input"]:
        return f'以下は、タスクを説明する指示と、文脈のある入力の組み合わせです。要求を適切に満たす応答を書きなさい。<NL>### 指示:<NL>{data_point["instruction"]}<NL>### 入力:<NL>{data_point["input"]}<NL>### 応答:<NL>{data_point["output"]}'
    else:
        return f'以下は、タスクを説明する指示です。要求を適切に満たす応答を書きなさい。<NL>### 指示:<NL>{data_point["instruction"]}<NL>### 応答:<NL>{data_point["output"]}'

# Redefines tokenize: pad/truncate to 257 tokens, then drop the last one (256 tokens)
def tokenize(prompt):
    result = tokenizer(
        prompt,
        truncation=True,
        max_length=256 + 1,
        padding="max_length",
    )
    return {
        "input_ids": result["input_ids"][:-1],
        "attention_mask": result["attention_mask"][:-1],
    }

train_data = train_data.shuffle().map(lambda x: tokenize(generate_prompt(x)))
val_data = val_data.shuffle().map(lambda x: tokenize(generate_prompt(x)))

trainer = transformers.Trainer(
    model=model,
    train_dataset=train_data,
    eval_dataset=val_data,
    args=train_args,
    data_collator=transformers.DataCollatorForLanguageModeling(tokenizer, mlm=False),
)
model.config.use_cache = False  # Silence the warnings. Re-enable for inference.
trainer.train()

For the record, this is what I used as a reference.

Using the finetune.py parameters as-is, training runs, but the output isn't correct:

import os
import sys
from typing import List

import fire
import torch
import transformers
from datasets import load_dataset

"""
Unused imports:
import torch.nn as nn
import bitsandbytes as bnb
"""

from peft import (
    LoraConfig,
    get_peft_model,
    get_peft_model_state_dict,
    prepare_model_for_int8_training,
    set_peft_model_state_dict,
)
from transformers import LlamaForCausalLM, LlamaTokenizer, GPTNeoXForCausalLM, GPTNeoXTokenizerFast, AutoTokenizer

from prompter import Prompter


def train(
    # model/data params
    base_model: str = "rinna/japanese-gpt-neox-3.6b",  # the only required argument
    data_path: str = "kunishou/databricks-dolly-15k-ja",
    output_dir: str = "./dolly-rinna-3b",
    # training hyperparams
    batch_size: int = 128,
    micro_batch_size: int = 4,
    num_epochs: int = 3,
    learning_rate: float = 3e-4,
    cutoff_len: int = 256,
    val_set_size: int = 2000,
    # lora hyperparams
    lora_r: int = 8,
    lora_alpha: int = 16,
    lora_dropout: float = 0.05,
    lora_target_modules: List[str] = [
        "query_key_value",
    ],
    # llm hyperparams
    train_on_inputs: bool = True,  # if False, masks out inputs in loss
    group_by_length: bool = False,  # faster, but produces an odd training loss curve
    # wandb params
    wandb_project: str = "",
    wandb_run_name: str = "",
    wandb_watch: str = "",  # options: false | gradients | all
    wandb_log_model: str = "",  # options: false | true
    resume_from_checkpoint: str = None,  # either training checkpoint or final adapter
    prompt_template_name: str = "japanese",  # The prompt template to use, will default to alpaca.
):
    if int(os.environ.get("LOCAL_RANK", 0)) == 0:
        print(
            f"Training Alpaca-LoRA model with params:\n"
            f"base_model: {base_model}\n"
            f"data_path: {data_path}\n"
            f"output_dir: {output_dir}\n"
            f"batch_size: {batch_size}\n"
            f"micro_batch_size: {micro_batch_size}\n"
            f"num_epochs: {num_epochs}\n"
            f"learning_rate: {learning_rate}\n"
            f"cutoff_len: {cutoff_len}\n"
            f"val_set_size: {val_set_size}\n"
            f"lora_r: {lora_r}\n"
            f"lora_alpha: {lora_alpha}\n"
            f"lora_dropout: {lora_dropout}\n"
            f"lora_target_modules: {lora_target_modules}\n"
            f"train_on_inputs: {train_on_inputs}\n"
            f"group_by_length: {group_by_length}\n"
            f"wandb_project: {wandb_project}\n"
            f"wandb_run_name: {wandb_run_name}\n"
            f"wandb_watch: {wandb_watch}\n"
            f"wandb_log_model: {wandb_log_model}\n"
            f"resume_from_checkpoint: {resume_from_checkpoint or False}\n"
            f"prompt template: {prompt_template_name}\n"
        )
    assert (
        base_model
    ), "Please specify a --base_model, e.g. --base_model='huggyllama/llama-7b'"
    gradient_accumulation_steps = batch_size // micro_batch_size

    prompter = Prompter(prompt_template_name)

    device_map = "auto"
    world_size = int(os.environ.get("WORLD_SIZE", 1))
    ddp = world_size != 1
    if ddp:
        device_map = {"": int(os.environ.get("LOCAL_RANK") or 0)}
        gradient_accumulation_steps = gradient_accumulation_steps // world_size

    # Check if parameter passed or if set within environ
    use_wandb = len(wandb_project) > 0 or (
        "WANDB_PROJECT" in os.environ and len(os.environ["WANDB_PROJECT"]) > 0
    )
    # Only overwrite environ if wandb param passed
    if len(wandb_project) > 0:
        os.environ["WANDB_PROJECT"] = wandb_project
    if len(wandb_watch) > 0:
        os.environ["WANDB_WATCH"] = wandb_watch
    if len(wandb_log_model) > 0:
        os.environ["WANDB_LOG_MODEL"] = wandb_log_model

    model = GPTNeoXForCausalLM.from_pretrained(
        base_model,
        load_in_8bit=True,
        torch_dtype=torch.float16,
        device_map=device_map,
    )

    tokenizer = AutoTokenizer.from_pretrained(base_model)

    tokenizer.pad_token_id = (
        0  # unk. we want this to be different from the eos token
    )
    tokenizer.padding_side = "left"  # Allow batched inference

    def tokenize(prompt, add_eos_token=True):
        # there's probably a way to do this with the tokenizer settings
        # but again, gotta move fast
        result = tokenizer(
            prompt,
            truncation=True,
            max_length=cutoff_len,
            padding=False,
            return_tensors=None,
        )
        if (
            result["input_ids"][-1] != tokenizer.eos_token_id
            and len(result["input_ids"]) < cutoff_len
            and add_eos_token
        ):
            result["input_ids"].append(tokenizer.eos_token_id)
            result["attention_mask"].append(1)

        result["labels"] = result["input_ids"].copy()

        return result

    def generate_and_tokenize_prompt(data_point):
        full_prompt = prompter.generate_prompt(
            data_point["instruction"],
            data_point["input"],
            data_point["output"],
        )
        tokenized_full_prompt = tokenize(full_prompt)
        if not train_on_inputs:
            user_prompt = prompter.generate_prompt(
                data_point["instruction"], data_point["input"]
            )
            tokenized_user_prompt = tokenize(user_prompt, add_eos_token=False)
            user_prompt_len = len(tokenized_user_prompt["input_ids"])

            tokenized_full_prompt["labels"] = [
                -100
            ] * user_prompt_len + tokenized_full_prompt["labels"][
                user_prompt_len:
            ]  # could be sped up, probably
        return tokenized_full_prompt

    model = prepare_model_for_int8_training(model)

    config = LoraConfig(
        r=lora_r,
        lora_alpha=lora_alpha,
        target_modules=lora_target_modules,
        lora_dropout=lora_dropout,
        bias="none",
        task_type="CAUSAL_LM",
    )
    model = get_peft_model(model, config)

    if data_path.endswith(".json") or data_path.endswith(".jsonl"):
        data = load_dataset("json", data_files=data_path)
    else:
        data = load_dataset(data_path)

    if resume_from_checkpoint:
        # Check the available weights and load them
        checkpoint_name = os.path.join(
            resume_from_checkpoint, "pytorch_model.bin"
        )  # Full checkpoint
        if not os.path.exists(checkpoint_name):
            checkpoint_name = os.path.join(
                resume_from_checkpoint, "adapter_model.bin"
            )  # only LoRA model - LoRA config above has to fit
            resume_from_checkpoint = (
                False  # So the trainer won't try loading its state
            )
        # The two files above have a different name depending on how they were saved, but are actually the same.
        if os.path.exists(checkpoint_name):
            print(f"Restarting from {checkpoint_name}")
            adapters_weights = torch.load(checkpoint_name)
            model = set_peft_model_state_dict(model, adapters_weights)
        else:
            print(f"Checkpoint {checkpoint_name} not found")

    model.print_trainable_parameters()  # Be more transparent about the % of trainable params.

    if val_set_size > 0:
        train_val = data["train"].train_test_split(
            test_size=val_set_size, shuffle=True, seed=42
        )
        train_data = (
            train_val["train"].shuffle().map(generate_and_tokenize_prompt)
        )
        val_data = (
            train_val["test"].shuffle().map(generate_and_tokenize_prompt)
        )
    else:
        train_data = data["train"].shuffle().map(generate_and_tokenize_prompt)
        val_data = None

    if not ddp and torch.cuda.device_count() > 1:
        # keeps Trainer from trying its own DataParallelism when more than 1 gpu is available
        model.is_parallelizable = True
        model.model_parallel = True

    trainer = transformers.Trainer(
        model=model,
        train_dataset=train_data,
        eval_dataset=val_data,
        args=transformers.TrainingArguments(
            per_device_train_batch_size=micro_batch_size,
            gradient_accumulation_steps=gradient_accumulation_steps,
            warmup_steps=100,
            num_train_epochs=num_epochs,
            learning_rate=learning_rate,
            fp16=True,
            logging_steps=10,
            optim="adamw_torch",
            evaluation_strategy="steps" if val_set_size > 0 else "no",
            save_strategy="steps",
            eval_steps=200 if val_set_size > 0 else None,
            save_steps=200,
            output_dir=output_dir,
            save_total_limit=3,
            load_best_model_at_end=True if val_set_size > 0 else False,
            ddp_find_unused_parameters=False if ddp else None,
            group_by_length=group_by_length,
            report_to="wandb" if use_wandb else None,
            run_name=wandb_run_name if use_wandb else None,
        ),
        data_collator=transformers.DataCollatorForSeq2Seq(
            tokenizer, pad_to_multiple_of=8, return_tensors="pt", padding=True
        ),
    )
    model.config.use_cache = False

    old_state_dict = model.state_dict
    model.state_dict = (
        lambda self, *_, **__: get_peft_model_state_dict(
            self, old_state_dict()
        )
    ).__get__(model, type(model))

    if torch.__version__ >= "2" and sys.platform != "win32":
        model = torch.compile(model)

    trainer.train(resume_from_checkpoint=resume_from_checkpoint)

    model.save_pretrained(output_dir)

    print(
        "\n If there's a warning about missing keys above, please disregard :)"
    )


if __name__ == "__main__":
    fire.Fire(train)
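With train_on_inputs=False, generate_and_tokenize_prompt above replaces the prompt part of the labels with -100, so the loss only covers the response. The default here is train_on_inputs=True, so this masking was not active in this run. Below is a pure-Python sketch of just the masking step. Like finetune.py, it assumes the tokenized user prompt is an exact prefix of the tokenized full prompt.

```python
IGNORE_INDEX = -100  # positions with this label are ignored by the cross-entropy loss


def mask_prompt_labels(full_ids: list[int], prompt_len: int) -> list[int]:
    """Copy full_ids as labels, hiding the first prompt_len tokens from the loss."""
    prompt_len = min(prompt_len, len(full_ids))  # truncation can make the prompt the whole sequence
    return [IGNORE_INDEX] * prompt_len + full_ids[prompt_len:]


# 3 prompt tokens followed by 2 response tokens (ids are arbitrary placeholders)
print(mask_prompt_labels([10, 11, 12, 13, 2], prompt_len=3))  # [-100, -100, -100, 13, 2]
```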

Maybe rinna itself just doesn't respond well to LoRA?

Trying regular (full) fine-tuning instead ran out of memory (OOM):

from transformers import T5Tokenizer, AutoModelForCausalLM, TrainingArguments, Trainer
from torch.utils.data import Dataset
import os

os.environ["WANDB_DISABLED"] = "true"

model_name = "rinna/japanese-gpt-neox-3.6b"

class TextDataset(Dataset):
    def __init__(self, txt_file, tokenizer):
        self.tokenizer = tokenizer
        with open(txt_file, 'r', encoding='utf-8') as f:
            # Skip empty lines (e.g. the one produced by a trailing newline)
            self.lines = [line for line in f.read().split('\n') if line.strip()]

    def __getitem__(self, idx):
        line = self.lines[idx]
        encoding = self.tokenizer.encode_plus(
            line,
            truncation=True,
            max_length=256,
            padding='max_length',
            return_tensors='pt'
        )
        # Remove the batch dimension from every field (input_ids and attention_mask)
        encoding = {key: value.squeeze(0) for key, value in encoding.items()}
        encoding['labels'] = encoding['input_ids'].clone()  # Use a copy of the input IDs as labels
        return encoding

    def __len__(self):
        return len(self.lines)

pre_trained_model = AutoModelForCausalLM.from_pretrained(model_name)
tokenizer = T5Tokenizer.from_pretrained(model_name)
dataset = TextDataset("dataset_20230525.txt", tokenizer)

training_args = TrainingArguments(
    output_dir="./results",           # Output directory
    overwrite_output_dir=True,        # Overwrite the output directory
    num_train_epochs=3,               # Number of training epochs
    per_device_train_batch_size=1,    # Training batch size
    per_device_eval_batch_size=64,    # Evaluation batch size
    warmup_steps=500,                 # Warmup steps for the learning-rate scheduler
    weight_decay=0.005,               # Weight decay strength
    logging_dir='./logs',             # Log directory
    fp16=True,                        # Enable fp16 mixed precision
    gradient_accumulation_steps=2048, # Gradient accumulation steps
    gradient_checkpointing=True,      # Enable gradient checkpointing
    optim="adafactor",                # Optimizer (adamw, adafactor)
)

trainer = Trainer(
    model=pre_trained_model,  # Model to train
    args=training_args,       # Training settings
    train_dataset=dataset     # Training data
)

trainer.train()
print("Training finished")
trainer.save_model("./trained_model")
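A rough static-memory estimate suggests why full fine-tuning ran out of memory. The model is loaded without torch_dtype, so as I understand it the weights and gradients stay in fp32 even with fp16=True, which only turns on mixed-precision compute. The sketch below assumes about 3.6e9 parameters and ignores activations entirely. The optimizer figures are assumptions: 8 bytes/param for AdamW's two fp32 moments, and 0 for Adafactor as a lower bound, since its factored state is small.

```python
def full_finetune_memory_gb(n_params: float, weight_bytes: float, grad_bytes: float,
                            optimizer_bytes: float) -> float:
    """Static training memory (weights + gradients + optimizer state), decimal GB.

    Activations, temporary buffers, and CUDA overhead are NOT included.
    """
    return n_params * (weight_bytes + grad_bytes + optimizer_bytes) / 1e9


N_PARAMS = 3.6e9  # nominal size from the model name
# fp32 weights (4) + fp32 grads (4) + AdamW moments (assumed 8)
print(f"AdamW:     {full_finetune_memory_gb(N_PARAMS, 4, 4, 8):.1f} GB")  # 57.6 GB
# fp32 weights + fp32 grads, optimizer state treated as ~0 (lower bound for Adafactor)
print(f"Adafactor: {full_finetune_memory_gb(N_PARAMS, 4, 4, 0):.1f} GB")  # 28.8 GB
```

Even the Adafactor lower bound is close to 29 GB. per_device_train_batch_size=1 and gradient checkpointing only shrink activations, so they cannot bring this down to a typical single consumer GPU. LoRA on a frozen fp16 or int8 base avoids most of this cost.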

npaka wrote a note article on fine-tuning, so I'll follow it.

Since I only need it to run, I cut down the step settings (halving them, among other changes).
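One thing to keep in mind when shrinking steps: in the script below, max_steps=100 takes precedence over num_train_epochs=3 (a positive max_steps overrides num_train_epochs), so training stops at 100 optimizer steps however many epochs that covers. Below is a rough single-GPU approximation of how the step count comes out otherwise. This is my simplification of the Trainer's logic, not its actual code, and the numbers in the example are hypothetical.

```python
import math


def total_train_steps(n_examples: int, per_device_batch: int, grad_accum: int,
                      epochs: int, max_steps: int = -1) -> int:
    """Approximate number of optimizer steps for a single-GPU Trainer run."""
    if max_steps > 0:
        # A positive max_steps wins over num_train_epochs.
        return max_steps
    batches_per_epoch = math.ceil(n_examples / per_device_batch)
    steps_per_epoch = max(batches_per_epoch // grad_accum, 1)
    return steps_per_epoch * epochs


# Hypothetical: 1000 examples, batch size 8, no accumulation, 3 epochs
print(total_train_steps(1000, per_device_batch=8, grad_accum=1, epochs=3))  # 375
```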

This got it running:

# Load the model
import os
from peft.utils.config import TaskType

os.environ["CUDA_VISIBLE_DEVICES"] = "0"

import torch
import torch.nn as nn
import bitsandbytes as bnb
from peft import LoraConfig, get_peft_model
import transformers
from datasets import load_dataset

# Basic parameters
model_name = "rinna/japanese-gpt-neox-3.6b"
# dataset = "kunishou/databricks-dolly-15k-ja"
is_dataset_local = False
dataset_file_path = "./20230528_sakura.json"
peft_name = "lora-sakura-3.6b"
output_dir = "lora-sakura-3.6b-results"

# Training parameters
eval_steps = 50  # 200
save_steps = 100  # 200
logging_steps = 50  # 20
max_steps = 100  # 4881 with dolly

# Prepare the dataset
# data = load_dataset(dataset)
data = load_dataset("json", data_files=dataset_file_path)

CUTOFF_LEN = 512  # Maximum context length

# The rinna tokenizer also needs use_fast=False
tokenizer = transformers.AutoTokenizer.from_pretrained(model_name, use_fast=False)

def print_trainable_parameters(model):
    """
    Print the number of trainable parameters in the model
    """
    trainable_params = 0
    all_param = 0
    for _, param in model.named_parameters():
        all_param += param.numel()
        if param.requires_grad:
            trainable_params += param.numel()
    print(
        f"trainable params: {trainable_params} || all params: {all_param} || trainable%: {100 * trainable_params / all_param}"
    )

model = transformers.AutoModelForCausalLM.from_pretrained(
    model_name,
    device_map='auto',
    load_in_8bit=True,
)
model.enable_input_require_grads()
model.gradient_checkpointing_enable()

config = LoraConfig(
    r=8,
    lora_alpha=32,
    lora_dropout=0.01,
    inference_mode=False,
    task_type=TaskType.CAUSAL_LM,
)
model = get_peft_model(model, config)
print_trainable_parameters(model)

# Tokenize
def tokenize(prompt, tokenizer):
    result = tokenizer(
        prompt,
        truncation=True,
        max_length=CUTOFF_LEN,
        padding=False,
    )
    return {
        "input_ids": result["input_ids"],
        "attention_mask": result["attention_mask"],
    }

# Prepare the prompt template
def generate_prompt(data_point):
    if data_point["input"]:
        result = f"""### 指示:
{data_point["instruction"]}

### 入力:
{data_point["input"]}

### 回答:
{data_point["output"]}"""
    else:
        result = f"""### 指示:
{data_point["instruction"]}

### 回答:
{data_point["output"]}"""
    # Newlines -> <NL>
    result = result.replace('\n', '<NL>')
    return result

VAL_SET_SIZE = 10

# Prepare the training and validation data
train_val = data["train"].train_test_split(
    test_size=VAL_SET_SIZE, shuffle=True, seed=42
)
train_data = train_val["train"]
val_data = train_val["test"]
train_data = train_data.shuffle().map(lambda x: tokenize(generate_prompt(x), tokenizer))
val_data = val_data.shuffle().map(lambda x: tokenize(generate_prompt(x), tokenizer))

trainer = transformers.Trainer(
    model=model,
    train_dataset=train_data,
    eval_dataset=val_data,
    args=transformers.TrainingArguments(
        num_train_epochs=3,
        learning_rate=3e-4,
        logging_steps=logging_steps,
        evaluation_strategy="steps",
        save_strategy="steps",
        max_steps=max_steps,
        eval_steps=eval_steps,
        save_steps=save_steps,
        output_dir=output_dir,
        report_to="none",
        save_total_limit=3,
        push_to_hub=False,
        auto_find_batch_size=True
    ),
    data_collator=transformers.DataCollatorForLanguageModeling(tokenizer, mlm=False),
)
model.config.use_cache = False  # Silence the warnings. Re-enable for inference.
trainer.train()
model.config.use_cache = True

# Save the LoRA model
trainer.model.save_pretrained(peft_name)
print("Done!")
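For inference, the prompt has to match the training template exactly, including the <NL> substitution. One simple approach is to reuse the template with an empty output, so the prompt ends right after "### 回答:<NL>", and then turn <NL> back into newlines in the generated text. The sketch below covers only the string handling, and its template is my copy of the one above. The model side is left out: loading the adapter with peft's PeftModel.from_pretrained(model, peft_name) and calling generate.

```python
def generate_prompt(data_point: dict) -> str:
    # Copy of the training template above, so this snippet runs standalone.
    if data_point["input"]:
        result = f"""### 指示:
{data_point["instruction"]}

### 入力:
{data_point["input"]}

### 回答:
{data_point["output"]}"""
    else:
        result = f"""### 指示:
{data_point["instruction"]}

### 回答:
{data_point["output"]}"""
    return result.replace("\n", "<NL>")


def build_inference_prompt(instruction: str, input_text: str = "") -> str:
    """Training template with an empty answer, so generation continues after '### 回答:'."""
    return generate_prompt({"instruction": instruction, "input": input_text, "output": ""})


def postprocess(generated: str) -> str:
    """Turn the model's <NL> markers back into real newlines."""
    return generated.replace("<NL>", "\n").strip()


print(build_inference_prompt("自己紹介して"))
```

Deriving the inference prompt from the same function keeps the two formats from drifting apart if the template is edited later.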

I switched the dataset from dolly to my own and ran LoRA on it.
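generate_prompt reads instruction, input, and output from each record, so a custom file needs those three keys (input can be an empty string). One layout that load_dataset("json", data_files=...) accepts is JSON Lines, with one object per line. Below is a small sketch for serializing records into that layout. The record in the usage line is a made-up placeholder, not the contents of 20230528_sakura.json.

```python
import json

REQUIRED_KEYS = ("instruction", "input", "output")


def to_jsonl_lines(records: list[dict]) -> list[str]:
    """Serialize records as JSON Lines, keeping only the keys generate_prompt uses."""
    lines = []
    for i, rec in enumerate(records):
        missing = [k for k in REQUIRED_KEYS if k not in rec]
        if missing:
            raise ValueError(f"record {i} is missing keys: {missing}")
        # ensure_ascii=False keeps Japanese text human-readable in the file
        lines.append(json.dumps({k: rec[k] for k in REQUIRED_KEYS}, ensure_ascii=False))
    return lines


def write_jsonl(records: list[dict], path: str) -> None:
    """Write the records to path, one JSON object per line."""
    with open(path, "w", encoding="utf-8") as f:
        for line in to_jsonl_lines(records):
            f.write(line + "\n")


# Placeholder record for illustration only
print(to_jsonl_lines([{"instruction": "挨拶して", "input": "", "output": "こんにちは"}])[0])
```

Extra fields (such as dolly's category) are dropped. A missing key fails early here rather than in the middle of a map over the dataset.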