💪

Pulling data from Wikipedia with RAG

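I heard that My Hero Academia (僕のヒーローアカデミア) had finished its run, so I belatedly started reading it.

These are my notes on building a little bot that answers questions with RAG, using the Wikipedia article as its source.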

Setup

install

! pip install langchain langchain_community langchain_chroma langchain-openai wikipedia

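import getpass
import os

from langchain_openai import ChatOpenAI

# Turn on LangSmith tracing (this is what the LangChain API key is used for).
os.environ["LANGCHAIN_TRACING_V2"] = "true"
os.environ["LANGCHAIN_API_KEY"] = getpass.getpass("LANGCHAIN_API_KEY: ")
# The OpenAI key is used by both the chat model and the embeddings.
os.environ["OPENAI_API_KEY"] = getpass.getpass("OPENAI_API_KEY: ")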
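Re-running the cell above asks for both keys every time. A small sketch of one way around that, assuming you'd rather be prompted only when a variable isn't set yet. `ensure_env` is a helper name I made up for this note, not something LangChain provides.

```python
import getpass
import os


def ensure_env(name, prompt=getpass.getpass):
    """Set os.environ[name] from an interactive prompt only if it is missing or empty.

    `ensure_env` is a hypothetical helper for illustration; `prompt` can be
    swapped out (for example in tests) for any function that returns a string.
    """
    if not os.environ.get(name):
        os.environ[name] = prompt(f"{name}: ")
    return os.environ[name]
```

In a notebook you would then call `ensure_env("LANGCHAIN_API_KEY")` and `ensure_env("OPENAI_API_KEY")` in place of the two direct `getpass.getpass` assignments.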

Running RAG

from langchain import hub
from langchain_chroma import Chroma
from langchain_community.document_loaders import WikipediaLoader
from langchain_core.output_parsers import StrOutputParser
from langchain_core.runnables import RunnablePassthrough
from langchain_openai import OpenAIEmbeddings
from langchain_text_splitters import RecursiveCharacterTextSplitter

# Set up the LLM
llm = ChatOpenAI(model="gpt-4o-mini", temperature=0.1)

# Load the article(s) from Japanese Wikipedia
loader = WikipediaLoader(query="僕のヒーローアカデミア", lang="ja")
docs = loader.load()

# Split into overlapping chunks, then embed them and store them in Chroma
text_splitter = RecursiveCharacterTextSplitter(chunk_size=1000, chunk_overlap=200)
splits = text_splitter.split_documents(docs)
vectorstore = Chroma.from_documents(documents=splits, embedding=OpenAIEmbeddings())

# Retrieve the relevant chunks of the Wikipedia article and generate an answer from them.
retriever = vectorstore.as_retriever()
prompt = hub.pull("rlm/rag-prompt")


def format_docs(docs):
    # Join the retrieved chunks into one context string for the prompt
    return "\n\n".join(doc.page_content for doc in docs)


rag_chain = (
    {"context": retriever | format_docs, "question": RunnablePassthrough()}
    | prompt
    | llm
    | StrOutputParser()
)
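To get a feel for what `chunk_size=1000, chunk_overlap=200` means, here is a simplified sliding-window splitter in plain Python. This is only a sketch: `RecursiveCharacterTextSplitter` itself tries to break at separators such as newlines first, so its chunks won't line up exactly like this. `split_with_overlap` is a name I made up, and the tiny numbers are just so the output fits on screen.

```python
def split_with_overlap(text, chunk_size, chunk_overlap):
    """Cut text into windows of at most chunk_size characters.

    Neighbouring windows share chunk_overlap characters. Hypothetical helper,
    a simplification of what a real text splitter does.
    """
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be >= 0 and smaller than chunk_size")
    step = chunk_size - chunk_overlap  # how far each new window moves forward
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this window already reached the end of the text
    return chunks


sample = "abcdefghijklmnopqrstuvwxyz"
for chunk in split_with_overlap(sample, chunk_size=10, chunk_overlap=4):
    print(chunk)
# abcdefghij
# ghijklmnop
# mnopqrstuv
# stuvwxyz
```

The overlap is there so that a sentence cut at a chunk boundary still appears whole in at least one chunk, at the cost of embedding some text twice.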

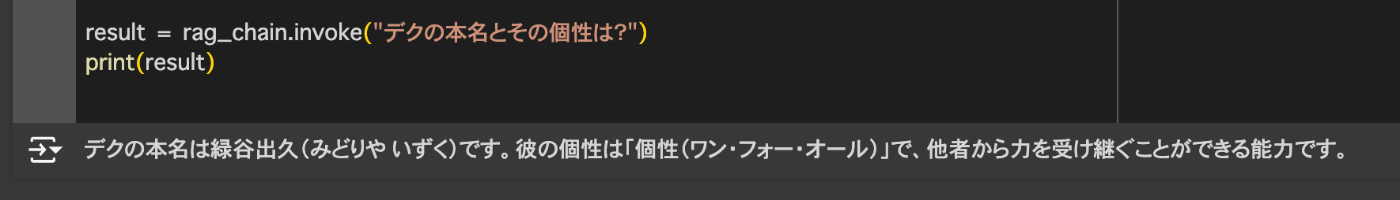

# Question: "What is Deku's real name, and what is his Quirk?"
result = rag_chain.invoke("デクの本名とその個性は?")
print(result)

It worked.
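For my own understanding, here is roughly what the `retriever | format_docs` part of the chain does: find the chunks whose embeddings are closest to the question, then join them into one context string. The toy sketch below uses hand-made 2-D vectors instead of real OpenAI embeddings. `cosine`, `top_k`, and the sample data are all made up for illustration, and cosine similarity is an assumption on my part, since the actual distance metric depends on how the vector store is configured.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def top_k(query_vec, indexed_chunks, k):
    """Return the k chunk texts whose vectors are most similar to query_vec."""
    ranked = sorted(
        indexed_chunks,
        key=lambda item: cosine(query_vec, item[1]),
        reverse=True,
    )
    return [text for text, _ in ranked[:k]]


# Hand-made 2-D "embeddings"; real ones have far more dimensions.
indexed_chunks = [
    ("chunk about characters", [0.9, 0.1]),
    ("chunk about publication history", [0.1, 0.9]),
    ("chunk about Quirks", [0.8, 0.3]),
]
question_vec = [1.0, 0.2]  # pretend embedding of a question about a character

# Same join as format_docs in the chain above
context = "\n\n".join(top_k(question_vec, indexed_chunks, k=2))
print(context)
# chunk about characters
#
# chunk about Quirks
```

The publication-history chunk points in a different direction from the question vector, so it is left out of the context that would be passed to the prompt.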
