💭

任意の確率分布でベイズ分類機を作る

やりたいこと

scikit-learnのnaive_bayesにはいくつかのモデルが実装されていますが、それ以外の確率分布、特にKDEでフィットした経験分布を用いてベイズ分類機を作りたい。

やり方

基本的には以下の通りです。

-

C -

X

任意の形のfitで観測データからパラメータを推定し、pdfで確率(密度)を返します。

分布抽象クラスのAPI

from abc import ABC, abstractmethod

from typing import Self

from nptyping import Float, NDArray, Number, Shape

class Distribution(ABC):

@abstractmethod

def fit(self, X: NDArray[Shape["N, D"], Number]) -> Self:

pass

@abstractmethod

def pdf(self, X: NDArray[Shape["N, D"], Number]) -> NDArray[Shape["N, D"], Float]:

pass

分類機側の実装

上記Distributionを各クラスラベルごとにfitし、predict_probaでBayesの定理でクラス確率を計算します。predictではクラス確率のargmaxを取って最も確率が高いクラスを返します。

from typing import Callable, Self

import numpy as np

from nptyping import Float, Int, NDArray, Number, Shape

from sklearn.base import BaseEstimator, ClassifierMixin

from .distribution import Distribution

class AnyBayesClassifier(BaseEstimator, ClassifierMixin):

def __init__(self, distribution_factory: Callable[[], Distribution]):

self.distribution_factory = distribution_factory

self.dists_: list[Distribution] = []

self.n_classes_: int = 0

def fit(

self, X: NDArray[Shape["N, D"], Number], y: NDArray[Shape["N"], Int]

) -> Self:

self.n_classes_ = max(y) + 1

self.dists_ = []

for class_ in range(self.n_classes_):

mask = y == class_

x = X[mask]

dist = self.distribution_factory().fit(x)

self.dists_.append(dist)

return self

def predict_proba(

self,

X: NDArray[Shape["N, D"], Number],

class_weight: list[float] | float = 1.0,

) -> NDArray[Shape["N, C"], Float]:

if self.n_classes_ == 0:

raise RuntimeError("You must fit the model before predicting")

if isinstance(class_weight, float):

class_weights = [class_weight] * self.n_classes_

else:

if len(class_weight) != self.n_classes_:

raise ValueError(

f"Expected {self.n_classes_} class weights, got {len(class_weight)}"

)

class_weights = class_weight

probs = []

for w, dist in zip(class_weights, self.dists_):

probs.append(dist.pdf(X) * w)

p = np.asarray(probs, dtype=np.float64).T.copy()

return p / p.sum(axis=1, keepdims=True)

def predict(

self,

X: NDArray[Shape["N, D"], Number],

class_weight: list[float] | float = 1.0,

) -> NDArray[Shape["N"], Int]:

probs = self.predict_proba(X, class_weight=class_weight)

return np.argmax(probs, axis=1)

テスト

scikit-learnのKernel Density Estimation(KDE)を利用した経験分布で分類機を作ってみます。

from dataclasses import dataclass

from typing import Any, Literal, Self, TypeAlias

import numpy as np

from nptyping import Float, NDArray, Shape

from sklearn.neighbors import KernelDensity

from ..distribution import Distribution

BandwidthType: TypeAlias = float | Literal["scott", "silverman"]

KernelType: TypeAlias = Literal[

"gaussian", "tophat", "epanechnikov", "exponential", "linear", "cosine"

]

MetricType: TypeAlias = str

MetricParamsType: TypeAlias = dict[str, Any] | None

@dataclass

class SKLearnKDE(Distribution):

bandwidth: BandwidthType = "scott"

kernel: KernelType = "gaussian"

metric: MetricType = "euclidean"

metric_params: MetricParamsType = None

def fit(self, X: NDArray[Shape["N, D"], Float]) -> Self:

self.kde_ = KernelDensity(

bandwidth=self.bandwidth,

kernel=self.kernel,

metric=self.metric,

metric_params=self.metric_params,

).fit(X)

return self

def pdf(self, X: NDArray[Shape["N, D"], Float]) -> NDArray[Shape["N, D"], Float]:

return np.exp(self.kde_.score_samples(X))

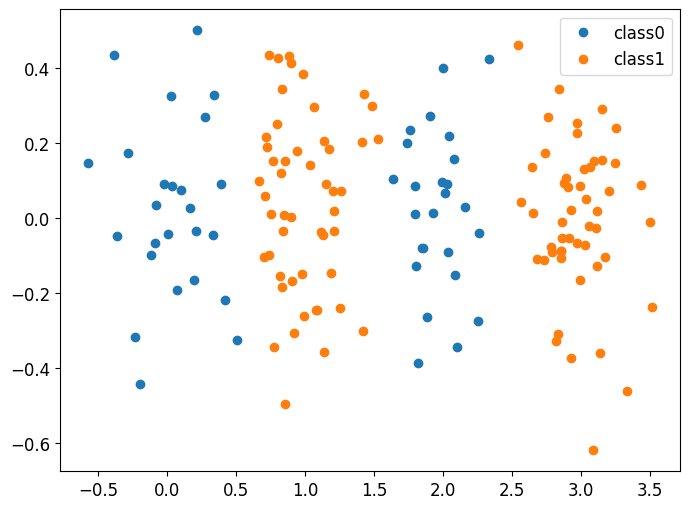

これを山が2つあるようなデータで試してみます。

import matplotlib.pyplot as plt

import numpy as np

np.random.seed(0)

m00 = np.array([0, 0])

m01 = np.array([2, 0])

m10 = np.array([1, 0])

m11 = np.array([3, 0])

s = 0.05

x00 = np.random.multivariate_normal(m00, np.eye(2)*s, 25)

x01 = np.random.multivariate_normal(m01, np.eye(2)*s, 25)

x0 = np.vstack((x00, x01))

x10 = np.random.multivariate_normal(m10, np.eye(2)*s, 50)

x11 = np.random.multivariate_normal(m11, np.eye(2)*s, 50)

x1 = np.vstack((x10, x11))

plt.plot(x0[:, 0], x0[:, 1], 'o', label='class0')

plt.plot(x1[:, 0], x1[:, 1], 'o', label='class1')

plt.legend()

まずはscikit-learnのGaussianNBを試してみます。

y0 = np.zeros(x0.shape[0])

y1 = np.ones(x1.shape[0])

X = np.vstack((x0, x1))

y = np.hstack((y0, y1)).astype(int)

from sklearn.naive_bayes import GaussianNB

model = GaussianNB().fit(X, y)

y_pred = model.predict(X)

print(classification_report(y, y_pred))

precision recall f1-score support

0 0.69 0.50 0.58 50

1 0.78 0.89 0.83 100

accuracy 0.76 150

macro avg 0.74 0.70 0.71 150

weighted avg 0.75 0.76 0.75 150

やはり複数の山があるとちょうど入れ替わっているあたりでうまく分類できず、訓練データに対してもスコアが伸びません。次にKDEでもやってみます。

from sklearn.metrics import classification_report

from anybayes import AnyBayesClassifier, IndependentSKLearnKDE

model = AnyBayesClassifier(distribution_factory=IndependentSKLearnKDE).fit(X, y)

y_pred = model.predict(X)

print(classification_report(y, y_pred))

precision recall f1-score support

0 0.98 1.00 0.99 50

1 1.00 0.99 0.99 100

accuracy 0.99 150

macro avg 0.99 0.99 0.99 150

weighted avg 0.99 0.99 0.99 150

訓練データに対する評価ですが、ばっちり分類できています!

今回実装したものはAnyBayesという名前でGitHubに公開しています。

Discussion